All training courses are provided in-house and the courses listed below can be mixed to meet your needs.

Please contact us to discuss what courses would best match your requirements and if you have any questions on specific courses or course categories.

This module aids participants with the practical implementation of a Data Governance Strategy.

All GOC Departments are now, at a high level, developing Data Strategies to align with the Privy Council report “A Data Strategy Roadmap for the Federal Public Service”, but how does this affect individual groups within GOC Departments, Agencies and Crown Corporations?

In this module, you will learn the key principles and best practices that drive Data Strategy and how they can be practically implemented in the workforce to provide a positive return on investment through key data practices, including how to:

- identify data needs and strategies for alignment with departmental strategy

- assess, review and address the needs of stakeholders

- be an ongoing champion of data use

- use data to guide decision making

- effectively prepare to share data both internally and externally

- obtain key insights from data

- use data to review and enforce accountability and responsibility

- connect data functions internally across groups and externally with OGD and 3rd parties

A department’s data analyses are only as good as the data that they access. Essentially, data engineering is the creation of data pipelines that enable the data scientists and analysts to do their work properly. As the workplace becomes more data focused, data engineering is moving from its traditional home in the IT department and directly into business lines. This module will give participants and overview of what data engineers do and how critical data pipelines are.

Participants will learn:

- the importance of a well designed data pipeline

- strategies for aggregating and combining data sources

- Treasury Board Secretariat guidelines and milestones in the storage and communication of Protected B (and high level) information

- what tools data Engineers need access to

- approaches to data collection and validation

This module is a pre requisite to obtain the course certification. As part of the certification process, participants are expected to present on a topic they learned through their training and present it to a group for critique and feedback. A mini report and a presentation are required to be submitted for Data Action Lab review and records.

As the focus for openness and transparency grows, the requirement to push more departmental data to the GOC open data portal becomes crucial. This is a time consuming process; it becomes important for departments to set up a streamlined set of workflows and processes to ensure efficiency and data quality. In this module, participants will get an overview of:

- best practices in publishing open data

- how to ensure consistency in publishing open data sets over time

- standards and protocols in publishing open data

- quality assurance of open data

A Key Performance Indicator (KPI) is a performance metric that allows organizations to understand the relative “health” of a department or group by monitoring activity over time. In a data focused workplace, all KPIs need to be well defined and monitored over time. Additionally, with the advent of ever easier to use analysis tools and access to broader data, KPIs that were not available in the past are now measurable. In this module, participants will learn::

- how to develop strategies and frameworks for KPI discovery

- the importance of defining KPI calculations

- identification and management of KPI data sources

- about tools for KPI management

- how to publish KPIs through Business Intelligence Dashboards

This module is designed to compliment the courses in DC-15 Visualizing Performance: Best Practices in Management Dashboard Design.

In this module we interactively walk through a dashboard requirements process including gathering end user and organization requirements, story boarding, and high level design process.

Poor data dashboard design comes from poor requirements analysis. Typically, dashboard design is left to data visualization experts; providing senior management with a list of best practices helps to build dynamic and effective dashboards from the outset. In this module, participants will learn to:

- effectively engage with the end users to properly define the context of the dashboard

- understand the importance of narrative and storyboarding as part of the dashboard design process

- understand what design elements are key to engaging the end user

- what charts to use with specific types of data and storytelling objectives

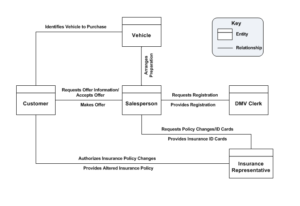

In this module, we look at tools to map the data sources that are utilized by a department, or group within a department, at a high level. This is a critical activity that allows us to set up a number of value add activities, including data management, identification of data relationships as part of data lineage analysis (where data comes from, what happens to it and where it moves over time), and discovery of hidden sensitive data and consolidation of multiple data sources.

Aimed at non technical managers, this module will enable participants to:

- create a high level view of the sources of data that support a group within a department

- understand the inter relationships between the data sources

- estimate the level of risk associated with the group data management approach

- align data requirements to group business requirements

- visit data analysis approaches that allow a deeper understanding of their data

All groups within GOC Departments and Agencies rely (to a degree) on rogue data sources (sometimes called black books). These are typically composed of Microsoft Excel spreadsheets compromised of copied and adjusted enterprise data, home grown Access databases, or data stored in specific analysis applications. In this module, we identify strategies for:

- performing an audit to create a list of black books

- understanding the forces driving their creation and ongoing maintenance

- reducing their number and relative importance

- migrating to more robust enterprise data sources

This module uses the information provided in DC 1 Developing a Data Strategy Roadmap and DC 2 Developing a Data Governance Strategy and walks participants through a pre defined checklist to assess where their Department, Group or Line of Business finds itself in relation to GOC and Departmental expectations.

Upon completion, the participants will have an understanding of where their GAPs are and have the ability to perform a more comprehensive analysis to come up with an action plan to close the GAPs.

The first of two modules providing an overview of standard software tools. This module looks at self service tools including (other tools may be included at the discretion of Data Action Lab or by request):

- Code based analysis tools

- Data visualization and Business Intelligence tools

- Data engineering tools

- Simulation and symbolic mathematical tools

- Statistical packages

In a dynamically changing workplace, it is difficult to understand what new and changing roles are required to build a data focused workplace. in this module, we outline some of the key new roles that may be required and what competencies each role requires. This will aid in the selection, hiring, development, and training of data employees, helping to provide maximum benefit to the organization and growth opportunities for the employees.

We cover several data roles, and take a closer look at competencies, roles and responsibilities for:

- domain experts

- data translators

- data engineers

- data scientists

- analysts (data and business)

- computer scientists

- computer engineers

- AI/ML QC specialists

The third and final module in our Artificial intelligence (AI) and Machine Learning (ML) project series. in this module, participants are presented with a series of case studies which are analyzed to derive lessons learned and best practices. The module also provides an opportunity for participants to present information on their own projects for the group to review and provide feedback.

This is the second of three modules on running Artificial intelligence (AI) and Machine Learning (ML) projects. in this module, you will learn:

- how to understand AI/ML infrastructure requirements

- AI/ML techniques trade off in relation to project requirements

- initial and ongoing risk management

- model management and prioritization

- quality control and assurance activities

- ongoing system management and maintenance

This is the first of three modules on running Artificial intelligence (AI) and Machine Learning (ML) projects. Compared to regular projects, these project categories typically require a different approach to set up and run. Due to the significant amount of discovery required in AI/ML applications, standard project management frameworks such as PMBoK and Prince2 (or similar) phased approach usually need to be modified to ensure project success. in the first of these modules, you will learn:

- what makes AI/ML projects different

- how to define requirements for AI/ML projects

- the importance of research and discovery as managed phases of project implementation

- new skills required of project managers

- critical roles in the implementation of AI/ML projects

This module investigates methods to link the results of data analysis with departmental or group objectives. The standard is often “analysis for the sake of analysis”. In this module, we show how to build an analysis framework that aligns with departmental and group objectives. Participants will learn:

- how to precisely articulate business objectives

- to identify what type of analysis will measure the business objective

- how do define what data is required to build the analysis models

- how to monitor the analysis over time and make the linkages between analytics and objectives

- how to take analysis results and make meaningful recommendations

This module provides tips and approaches to help empower a data focused workplace. It is not sufficient to rely on a small number of individuals to manage and use data – all employees need to understand their role in the creation, analysis, dissemination and curation of organizational data. in this module, you will learn how to:

- create a data based decision culture where the fundamental objectives are collection, analysis, and deployment of data to make better and more informed decisions

- understand the tools that allow the workforce to enhance their analysis and decision making skills

- understand what specific data training & development is required to up skill the workforce

- recognize the data competencies that are required to run a standard data workplace

- establish the importance of democratizing data, or how to get the right data in front of the right people at the right time

- deploy dynamic risk management in everyday data driven decision making

- find and empower your organization’s data evangelists

- build a reliable and consistent data management process

In the recent Privy Council report “A Data Strategy Roadmap for the Federal Public Service”, a roadmap was laid out for GOC Departments to create Data Strategy and Governance frameworks and to implement them through a robust Data Strategy Roadmap.

This module investigates the impact this has on GOC Departments, and, more importantly, on the groups and lines of business within those Departments.

We provide guidance and recommendations derived from widespread best practices on how Senior Executives and Managers can design and implement activities to:

- create an internal roadmap to implement Departmental Strategy and Data Governance

- establish a decision making group to enforce departmental mandates including accountabilities, roles and responsibilities

- identify key roles and responsibilities around data leadership

- understand ethical and secure use of data

- assess the current state of data literacy, skills and competencies

- establish good hiring practices

- review and update data training and development strategies

- understand departmental policy frameworks and how they affect the group / line of business

- assess infrastructure needs

- help foster innovation through pilot projects

- develop a data quality framework

Some fundamental ideas and perspectives apply to any kind of data practice. This course introduces learners to these fundamentals by providing case studies in data analysis and machine learning, presenting, in broad strokes, the underlying concepts that support data-related work and leaving them with some thought-provoking questions on these topics to ponder and discuss. The main goal of this course is to ensure that learners start their data practitioner or data scientist journey in the right direction.

Data analysis can’t happen without data, and that data must come from somewhere. Data collection and and data processing typically take up the bulk of the time spent on any data project; how well this is accomplished carries through to the end of the project.

In this course, we survey the options for cleaning and transforming the data in order to make it more suitable for analysis.

This course is where the “rubber hits the road” and where learners start to work with data in a hands-on fashion. Its focus is on putting into practice, in a preliminary and basic way, many of the topics used in data analysis: data visualization, data exploration, data wrangling, and data modeling. This is done via basic analyses of data: summaries of variables, simple calculations related to these summaries, exploring subsets of the data, and gaining some basic insights into the information contained in datasets.

Devising well-constructed strategies to measure the properties of key objects of interest, and then combining these measures into more abstract metrics that relate to research hypotheses or organization goals is an important component of data analysis. Measures and metrics are typically very specific to a domain or problem of interest, but case studies in one domain can provide ideas and best practices that are applicable to others. In this course, in addition to considering some of the fundamental concepts relating to these topics, learners are provided with several case studies of measures and metric development.

Data and knowledge architecture concepts are critical in designing a data-driven project, and there have been a number of new and important developments on that front in recent years (e.g. NoSQL, graph databases, data lakes). The high-level goal of this course is to expose learners to many of the concepts and terms at play in today’s data world and to help them to decide which of these is most applicable to their particular data projects.

Once the dataset has been successfully explored, described, and cleaned, analysts are in a position to use it to do more than just understand what has already happened. With enough of the right kind of data, they may also be able to predict novel or future occurrences. Predictive analysis has traditionally relied on regression as its technique of choice. New types and increasing amounts of data have led to the recent development of new techniques in this area, including time series analysis techniques and machine learning techniques, such as neural networks. This course gives an overview of these prominent prediction techniques, which provides learners with a starting point from which to begin developing predictive analysis skills.

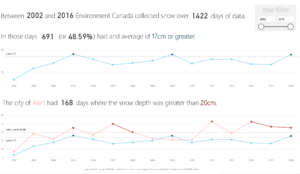

Prior to running analyses, it is crucial to gain an understanding of the dataset and how it is behaving. This process starts while cleaning the data. Increasingly sophisticated visualization strategies can play an important role at this stage, as well as at the end of the data analysis process, when insights are presented to others. This course presents simple methods to visualize data, with R/Python examples.

Prior to running analyses, it is crucial to gain an understanding of the dataset and how it is behaving. This process starts while cleaning the data. Increasingly sophisticated visualization strategies can play an important role at this stage, as well as at the end of the data analysis process, when insights are presented to others. This course presents mutlivariate methods to visualize data, with R/Python examples, and design suggestions to generate engaging visualizations.

This lab is designed for participants to cement their data visualization skills and to develop competencies. In a collaborative environment, participants can bring along their own data set or use an example data set provided by Data Action Lab. Visualizations will be created and constructively criticized by all attendees.

This lab is software tool agnostic, typically attendees create visualizations in Excel, Power BI, SAS, R, Python or any other tool of their choice.

Poorly designed visualizations (graphs, reports, charts, slides etc.) can lead to confusion and in the worst case erroneous business decisions. End users are constantly seeking the best ways to understand the data behind the data. The most effective way to help end users is by making it visual for them. This module is aimed at taking participants through the basics of data visualization and design whether you are creating Power BI interactive reports, generating charts in Excel or management presentations in PowerPoint. This module will help you to:

- Effectively engage with the end users to properly define reporting context

- Understand the importance of narrative and storyboarding as part of the design process

- Understand what design elements engage inconic, short and long term memory

- Matching visualizations to data, including best practices and implementation hacks (Excel and Power BI) for:

- Interactive text, Data tables, Data table heatmaps, Scatterplots and bubble plots, Line charts, Bar Charts (Vertical & Horizontal), Stacked Bar Charts (Vertical & Horizontal), 100% Bar Charts (Vertical & Horizontal), Area Charts, Waterfall Charts, Treemaps, Funnel Charts, Key Performance Indicator Gauges, Data Geographical Maps and Choropleth Maps

- Charts and visualizations to avoid

- Fully understand the basic rules of Design and Layout including:

- Gestalt Principles, Pre-attentive Attributes, Decluttering your charts, dashboards and reports, Size and positioning, Basic colour rules and introduction to colour wheel calculations

Data visualizations can be used for reporting endeavours in a variety of manners, but they can also be used to explore data and set the stage for more in-depth analysis, and for insight extraction. In this module, participants will

- Learn about the different roles of data visualization in the data analysis process.

- Increase their understanding of how to represent simultaneously multiple dimensions.

- Learn some of the strategies and considerations used to create good post-analysis visualizations.

- Learn the difference between a visualization and an infographic.

- Improve their judgment about the quality of data visualizations.

In particular, participants will study the use of data visualizations for

- Detecting anomalous and invalid entries

- Shaping data transformations

- Getting a sense for the data

- Identifying hidden data structure

They will also study the fundamental principles of analytical design and learn how to recognize their application in a number of case studies, Finally, they will study the grammar of graphics.

From John Snows cholera map, through the Nightingale military mortality Rose Charts to Minard’s March to Moscow, history is replete with amazing examples of data visualization that we can take lessons from and apply to our work today.

This course guides participants through a number of history changing visualizations and the group will break down the key elements of each one to come up with a list of best practices, concepts and approaches that can be applied in the environment today using new tools and techniques.

This two-week bootcamp will take you from not knowing anything about computer programming (a ‘Noob’ in the programming world) to starting to self-learn with confidence. The first week will focus on an overview of programming practices and strategies, along with a discussion of other computer concepts relevant to programming: types of programming languages and programmers, programming best practices, programming theory and practice, operating systems and file systems, internet and cloud protocols. There will be hands-on exercises in the first week, but they will not require participants to write code from scratch. The second week will be a series of labs where participants learn to write a series of simple programs using both Python (as an example of a general programming language) and R (as an example of a specialty programming language, focusing on data manipulation, analysis and visualization), culminating in a cap-stone simple web-app project.

Hot on the heels of the data science explosion, data translators are becoming the next big in-demand role in data-driven projects. But what is a data translator, and what do you need to know to become one? This one-day workshop will introduce you to the data translator role, which focuses on helping data scientists and business stakeholders communicate with each other. The workshop will also review the fundamental concepts and areas of expertise with which all data translators must be familiar, including: data science, data engineering, business intelligence and data management. The workshop will conclude by reviewing the typical parts and processes of data science projects, and explore the role of the data translator at each stage.

Data analysis can only occur within the context of a supporting data architecture. Although the technological underpinnings and exact construction of such architectures can be vary, there are design principals that can be defined and described across technologies. This half-day workshop introduces a number of different high level data and information architectures, including databases, data lakes, ontogies, NoSQL and graph databases. Within the context of these architectures, the module also discusses, more generally what it means to structure data, and why choosing the correct data structure is essential in enabling the intended use of your data.

Before data can be used for increased awareness, decision support and knowledge discovery, it must be transformed from its raw state into one that is valid, usable and applicable to a particular problem. This half-day workshop provides a first pass look at some of the many different elements of data processing: data transformation, data validation, data cleaning and dealing with missing data, as well as some of the typical considerations and issues that arise at each of these data processing stages.

The French idiomatic expression “l’habit ne fait pas le moine” cautions analysts and data consumers alike not to fall into the trap laid by pretty pictures: content is more important than style. In a world where stakeholder buy-in and data storytelling is becoming increasingly important, however, there is no denying that great content and good visuals provide a significant upgrade on great content alone. In this course, we discuss the grammar of graphics (as implemented in the R tidyverse package ggplot2).

After having spent some time on data preparation, data cleaning, data exploration, and on a survey of the basics of programming, as well as on mathematical and statistical preliminaries, learners are now ready to discuss the general tasks and problems of Statistical Learning (also called Machine Learning). In this course, learners are additionally introduced to their first (unsupervised) learning task: association rules mining.

When it comes to using mathematical and statistical language and formalism, supervised learning slots into a role akin to that played by physics: complex, yes, but quite well-suited to the language and with a long history of applications. Unsupervised learning (such as clustering) is more similar to biology: it has not been studied with the same formalism and to the same extent, because it is, quite simply, harder to do so (not in the sense that the algorithms are too complicated, but in the sense that their results are harder to validate). Interest in those methods is increasing, however. In this MCT, we discuss the basics of clustering and tackle some of its issues and challenges. We also introduce k-Means, hierarchical clustering, and discuss clustering validation.

Supervised learning tends to be easier to set-up than unsupervised learning, given the general clarity of the questions that are tackled and the ease with which we can evaluate a model’s performance. In some sense, classification and value estimation (regression) are the quintessential machine learning tasks; more so than unsupervised learning methods. In this course, we discuss the basics of classification, and some its issues and challenges. We also introduce decision trees, naïve Bayes classifiers, and discuss performance evaluation.

The methods of supervised and unsupervised learning can be applied to data that arises in non-numeric or non-categorical formats, such as text or images. The challenge there is often to find a way to transform the unstructured data into structured objects that can be fed into classification or clustering algorithms, say. In this course, we discuss the intricacies of preparing text data for analysis.

The methods of supervised and unsupervised learning can be applied to data that arises in non-numeric or non-categorical formats, such as text or images. The challenge there is often to find a way to transform the unstructured data into structured objects that can be fed into classification or clustering algorithms, say. In this course, we discuss text classification and sentiment analysis.

The methods of supervised and unsupervised learning can be applied to data that arises in non-numeric or non-categorical formats, such as text or images. The challenge there is often to find a way to transform the unstructured data into structured objects that can be fed into classification or clustering algorithms, say. In this course, we discuss the myriads way text data can be visualized.

The methods of supervised and unsupervised learning can be applied to data that arises in non-numeric or non-categorical formats, such as text or images. The challenge there is often to find a way to transform the unstructured data into structured objects that can be fed into classification or clustering algorithms, say. In this course, we discuss the basics of natural language processing.

The methods of supervised and unsupervised learning can be applied to data that arises in non-numeric or non-categorical formats, such as text or images. The challenge there is often to find a way to transform the unstructured data into structured objects that can be fed into classification or clustering algorithms, say. In this course, we discuss other applications of natural language processing: named-entity recognition, semantic parsing, summarization, etc.

The methods of supervised and unsupervised learning can be applied to data that arises in non-numeric or non-categorical formats, such as text or images. The challenge there is often to find a way to transform the unstructured data into structured objects that can be fed into classification or clustering algorithms, say. In this course, we discuss topic modeling.

To be comfortable with machine learning, learners need some knowledge of a number of mathematical disciplines, including statistics, linear algebra, multi-variable calculus, and mathematical modelling. This course provides learners with a high-level survey of some of the fundamental concepts of these disciplines, focusing on a few key topics in each. The goal of the course is to orient the learner towards these disciplines and concepts, to allow for future in-depth learning.

This half-day workshop provides participants with an introduction to:

- The foundational components of AI and ML.

- Business functions (processes, etc.) typically involved in AI/ML projects.

- Hardware and software requirements and resources needed for AI/ML projects (staff, equipment, expertise), including some discussion of API supporting technologies and data.

Where possible, relevant government examples will be used to illustrate these topics, and during the workshop there will be additional discussion with workshop attendees about where AI could usefully be applied in their work contexts.

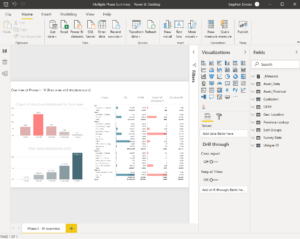

The Data Actional Lab holds a series of Data Labs on various topics. The Data Lab on the third week of each month is dedicated to GOC Power BI users. Each month is different but typically participants bring along problems for the group to solve. To attend the ongoing Data Labs a monthly attendance fee is required. The payment of this fee allows the organization to send up to five participants to any of the labs through the month. For more details please see the Data Action Lab website

There are many online resources to learn Power BI (for example the Microsoft / EDX course). Unfortunately, GOC employees are not always able to access these courses at their desk or at home.

To address this issues Data Action Lab hosts interactive learning environment where participants can work through the on-line learning in a supervised environment. The learning is self paced and a technical expert is on hand to help the learner through any issues. This module can be delivered in house but there is a minimum commitment of three months once per week

In this module we will work with participants to integrate both R and Python script into Power BI. A high-level understanding of either R or Python is required but the course presenter will walk participants through some basic R script and/or Python. If this course is purchased as an in-house module we can concentrate specifically on R or Python or both at the discretion of the client. In this course participants will learn how to:

- Embed R script to import data in Power BI

- Embed Python to import data in Power BI

- Combining imported data into existing Power BI data model

- Using R script and/or Python to create interactive visuals in Power BI

This module is designed to look in more depth in data modeling in Power BI. Excel users are very used to data presented as a flat file (a tab or table) but to extract the greatest value from Power BI we need to look at our data from a dynamic, relational perspective. In this course we will:

- Learn in detail what makes a good data model

- Understand how to optimize data models for data visualization

- Where to use cross reference tables

- How to create dynamic cross reference tables that adjust to changing input data

- How to optimize data models for large data sets

This module is aimed at taking participants past their first chart creation in Power BI and to shortcut the user to some relatively sophisticated visualizations that are not technically difficult to create. The module will help you to:

- Get a head start on Data Modeling

- Get up to speed in DAX – effective and easy top tips

- Get up to speed in M – effective and easy top tips

- How to overcome common data analysis issues

- Useful intermediate Power BI “hacks”

- Introduction to non-standard charts

This module is aimed at taking participants through the initial stages of inputting data and creating their first interactive charts, reports and visualizations. This module will help you to:

- Understand the importance of clean data

- How to import data from single and multiple data sources

- Manipulating the data as it is imported into Power BI

- Creating our first calculations

- Creating our first custom columns

- Creating our first charts

- Basic interactive filtering

- Creating basic hierarchies of data

- How to layout a report and dashboard

- How to publish reports and dashboards to end users

This module is designed for the absolute Power BI beginner. We walk the participants through the Power BI basics including:

- How Power BI fits into a larger software framework

- Where, and where not to use Power BI

- Excel vs Power BI – pros and cons

- A Power BI walkthrough

- Power Query (with a brief overview of M)

- Data Modeling view

- Data layout view

- Data visualization view

- What is DAX and how it is used

- How to publish with Power BI

At the end of this session participants should be comfortable with what Power BI should be used for and have the ability to open a simple data set and create some simple visualizations

Programming languages go in and out of style. To be a strong programmer, it’s important to understand not just the ins and outs of a particular programming language, but how computer languages and computing infrastructure work more generally. In this course, learners are introduced to some of the core concepts of computer programming, in a language-agnostic way.

The French idiomatic expression “l’habit ne fait pas le moine” cautions analysts and data consumers alike not to fall into the trap laid by pretty pictures: content is more important than style. In a world where stakeholder buy-in and data storytelling is becoming increasingly important, however, there is no denying that great content and good visuals provide a significant upgrade on great content alone. In this course, we introduce dashboards and data storytelling.

Data analysis can’t happen without data, and that data must come from somewhere. Data collection and and data processing typically take up the bulk of the time spent on any data project; how well this is accomplished carries through to the end of the project. In this course, we consider the main elements required to succeed in data collection, as well as the many ways this activity can go awry.

This full-day in-person, hands-on workshop provides participants with information on using R for Data Science. It is intended for people who already have a programming background. The workshop is structured as follows:

Morning – Theory:

Review of R as a programming language:

History and comparison with other languages (e.g. Python)

Programming environment options – R Studio, R Markdown

Overview of key packages: Statistical Packages, Machine Learning Packages, TidyVerse (data processing + visualization), Shiny

Programming basics – conceptual overview

Brief Review of Relevant Statistical Concepts:

Descriptive Statistics

Statistical Modelling

Comparison between Statistics and Machine Learning

Afternoon – Hands-On:

Quick hands-on walk through of R command-line and R-Studio (e.g. running scripts, installing packages)

Getting into R program – with Pre-Worked Examples in R-Markdown

Practice Exercises and Lab Time

Data science tasks break down when the datasets become too large. Throw enough time and money at this specific problem and it will eventually evaporate. But what can one achieve on a budget? In this course, participants will learn to tackle simple Big Data problems using Spark and H20.

Data mining is the collection of processes by which we can extract useful insights from data. Inherent in this definition is the idea of data reduction: useful insights (whether in the form of summaries, sentiment analyses, etc.) ought to be “smaller” and “more organized” than the original raw data. The challenges presented by high data dimensionality (the so-called curse of dimensionality) must be addressed in order to achieve insightful and interpretable analytical results. In this course, we introduce the basic principles of dimensionality reduction and a number of feature selection methods (filter, wrapper, regularization), discuss some advanced topics (SVD, spectral feature selection, UMAP and other topological reduction methods), with examples.

Bayesian analysis is sometimes maligned by data analysts, due in part to the perceived element of arbitrariness associated with the selection of a meaningful prior distribution for a specific problem and the (former) difficulties involved with producing posterior distributions for all but the simplest situations. On the other hand, we have heard it said that “while classical data analysts need a large bag of clever tricks to unleash on their data, Bayesians only ever really need one.” With the advent of efficient numerical samplers, modern data analysts cannot shy away from adding the Bayesian arrow to their quiver. In this course, we will introduce the basic concepts underpinning Bayesian analysis, and present a small number of examples that illustrate the strengths of the approach.

With the advent of automatic data collection, it is now possible to store and process large troves of data. There are technical issues associated to massive data sets, such as the speed and efficiency of analytical methods, but there are also problems related to the detection of anomalous observations and the analysis of outliers. Extreme and irregular values behave very differently from the majority of observations. For instance, they can represent criminal attacks, fraud attempts, targeted attacks, or data collection errors. As a result, anomaly detection and outlier analysis play a crucial role in cybersecurity, quality control, etc. The (potentially) heavy human price and technical consequences related to the presence of such observations go a long way towards explaining why the topic has attracted attention in recent years. In this course, we will review various detection methods, and provide a comparative analysis of algorithms (performance, limitations), illustrated with the help of some practical examples, and with particular attention paid to supervised and unsupervised methods.